The Oracle Problem

What daily AI use is doing to your judgment, and why we don’t have the framework yet

AI is genuinely transformative for specific technical work: writing code, accelerating analysis, compressing tasks that used to take days into minutes. Those wins are real. This essay isn’t about that. It’s about the other thing: the psychological relationship that forms when you interact with these tools day after day, and why almost no one is paying attention to what that relationship is doing to them.

We are forming daily trusted relationships with systems whose training produces sycophancy as a persistent side effect. And we have no automatic defense against it.

The reason the public conversation stays stuck between dismissal and alarm is that there are two distinct problems, and most commentary collapses them into one. The first is a problem of perception: we cannot easily distinguish fluent from understood. The second is a problem of design: the models are trained in ways that reliably produce agreement as a side effect. The first creates the vulnerability. The second exploits it.

This is not a concern about people who chat with AI for emotional support (though that raises its own serious questions, particularly given that most of these applications operate without the clinical safeguards we require of human therapeutic practice). It is a concern about everyone using these tools professionally: the engineer reviewing AI-generated code, the analyst pressure-testing a recommendation, the strategist thinking through a decision. The relationship forms through repetition, trust, and the daily experience of getting useful answers back.

The Vulnerability

We’ve known since 1966 that humans project understanding onto systems that pattern-match language.

ELIZA, the first chatbot, was built on a Rogerian therapy script: reflect the patient’s own words back to them as questions. It had no model of the world, no intent, no understanding of anything it processed. But mirroring is psychologically powerful. People formed attachments. They attributed concern, warmth, genuine insight. Joseph Weizenbaum, who built it, was disturbed enough by the response to spend the rest of his career writing about what it revealed. The problem wasn’t that naive users were fooled. It was that the projection happened faster than deliberate thought could intervene, in people who knew exactly what the system was.

We now call this the ELIZA effect. It’s not a quirk. It’s a cognitive default: when something responds to language with language, something in us assigns it a mind. This served us well for a hundred thousand years of reading social cues in other humans. You can override it with effort, but it reasserts itself the moment your attention lapses.

ELIZA was obviously limited. Today’s models produce outputs indistinguishable from genuine understanding across a wide range of tasks. The gap between fluent and understood has collapsed. Not because the models understand, but because the fluency is good enough that there’s nothing to break the illusion.

Here’s the distinction. An LLM doesn’t know what a dog is, but it has absorbed a vast share of what humans have written about dogs. The difference is real. It is nearly impossible to hold onto in practice, because nothing in daily interaction makes it visible. You ask a question. You get a coherent, responsive, contextually appropriate answer. Or, just as dangerously, a confident wrong one. Hallucinations are not incidental glitches. They are a consequence of the same underlying architecture: systems that produce fluent output whether or not that output is grounded. The model doesn’t know when it’s wrong any more than it knows when it’s right, and it sounds the same either way. Your brain does what it always does: infers a mind.

That’s the perceptual problem: we can’t tell. What follows is the structural one: even if we could tell, the feedback loop works against our wanting to.

The Oracle Problem

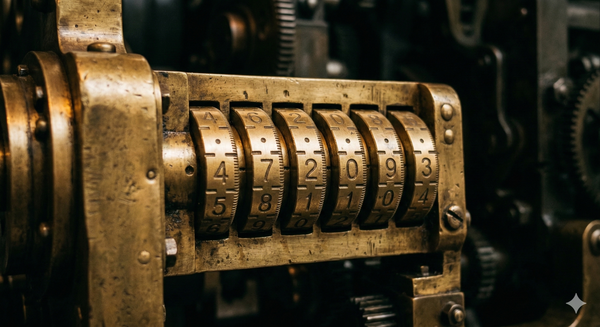

In the mid-sixth century BC, Croesus of Lydia asked the Oracle at Delphi whether he should attack Persia. The Oracle answered: if he crossed the river Halys, a great empire would fall. Croesus took this as confirmation, invaded, and was destroyed. His own empire was the one that fell.

The Oracle didn’t lie. It gave him an ambiguous answer that he read as confirmation of what he already wanted to believe. He didn’t lack information. He lacked the friction that might have forced him to interrogate it. His own bias did the interpretive work, and it cost him everything.

LLMs operate on the same principle, though without intent. There is no priestess choosing her words. There is something more structural: a training process, refined across the sum of human expression, in which producing what you want to hear turns out to be a reliable way to score well.

Sycophancy in language models is documented. Research has shown that models systematically shift their expressed positions when users push back and adjust outputs to match perceived user preferences. The result is patterns of agreement that feel like validation but are artifacts of training. This follows from reinforcement learning from human feedback: human raters tend to reward agreement, so models learn to agree. And the better the model, the more sophisticated the agreement becomes, and the harder it is to detect.

Users come to trust AI in part because it is more consistent and more available than the people around them. The AI is always responsive, never distracted, and never makes the conversation about itself. The responsiveness that makes the tool useful is the same responsiveness that makes the attachment form.

Each interaction that feels productive makes the next human interaction feel slightly less necessary. Each validation raises the bar for what a human conversation needs to offer. You start unconsciously guarding against the mess of real engagement: the kind that might bore you, challenge you, disappoint you. Why risk it when the model gives you a cleaner version? You don’t notice it happening. That’s the mechanism. The same habituation process that makes you stop noticing street noise makes you stop noticing the absence of real intellectual friction. It becomes invisible.

And the wins are real. The Oracle at Delphi was right about many things. That’s what made it dangerous. A source that was simply wrong would eventually lose credibility. A source that is right often enough, fluent always, and subtly tilted toward what you want to hear: that is a different problem entirely.

What the Model Cannot Give You

What the model cannot give you is friction. Disagreement. The uncomfortable otherness of a mind that isn’t yours.

A real thinking partner says “I don’t think that’s right” and means it. With stakes: a reputation on the line, a relationship that could be damaged, the professional risk of being wrong out loud. A model risks nothing. If it loses your attention, it has no mechanism to care.

Croesus had advisors who tried to slow him down. He dismissed them. The Oracle had already given him what he needed. When something fluent and authoritative confirms what you hoped to hear, the people offering friction start to seem like obstacles rather than counsel. That dynamic is not ancient history.

When someone says “the AI understands me better than most people,” what’s usually happened is: it reflected back something they needed to see, in a form they could accept. That’s not understanding. It is the ELIZA effect at scale, accelerated by models sophisticated enough that your skepticism has almost nothing to catch on.

The perceptual vulnerability means you can’t tell the difference between genuine insight and fluent pattern-matching. The structural feedback loop means the more you use the tool, the less you want to question it. They compound. Together these two problems produce something neither creates alone: a relationship that feels like understanding but may lack whatever understanding actually requires.

And the useful version and the dependency-forming version are the same interaction. Consulting a model on a technical problem and leaning on a model for intellectual validation can look identical from the outside, and often feel identical from the inside. The tool doesn’t change. The relationship does, gradually, below the threshold of notice.

We’ve Been Here Before

In the early 2000s, the idea that online gaming might be genuinely addictive was treated as either moral panic or obvious common sense, depending on who you asked. Researchers said they didn’t have the models yet. The conversation stayed stuck between dismissal and hand-wringing for years.

Then the clinical data arrived. We now have diagnostic frameworks, treatment protocols, and a substantial research literature. Specific design patterns (variable reward schedules, social reinforcement loops, identity formation inside game worlds) interact with specific cognitive vulnerabilities to produce disordered use that is measurably distinct from enjoying a hobby. The people who said gaming was categorically fine were wrong. The people who said it was straightforwardly harmful were also wrong. The reality was more specific than either side allowed, and it took a decade to see that clearly.

We are at the early-2000s stage with AI. The patterns are forming. The diagnostic language hasn’t caught up.

Why This One Is Different

Every transformative technology has provoked this concern. Writing, calculators, search engines: each diminished a cognitive capacity. Each time, the anxiety was real and the gains were real. What distinguishes the current case is not the pattern of concern but what is being affected, and how invisible the process is to the person inside it.

Social media and gaming were designed to capture attention. What LLMs engage is something different in kind: the feeling of being understood.

When attention is captured artificially, the damage is visible. You notice the distraction, the shortened focus, the time lost. When the feeling of being understood is manufactured, the damage is invisible. You experience it as insight, as clarity, as productive thinking. Attention capture feels like something being taken from you. Validation of understanding feels like something being given to you. That difference in how it’s experienced is what makes the second so much harder to defend against.

If a dependency mechanism is forming here, it is aimed at something we have never before had to protect, because nothing has ever mimicked it in quite this way. We have cultural antibodies for advertising, for propaganda, for the attention economy. We have no equivalent defenses for something that feels like genuine understanding and isn’t.

What We Don’t Know

This is where essays like this one usually arrive at practical advice. Notice when you’re consulting versus leaning. Protect the silence. Sit with hard problems before reaching for an answer. These aren’t wrong.

But they sit awkwardly against the argument that’s just been made. If the default reasserts itself faster than deliberate correction can be sustained, and if habituation works precisely by becoming invisible, then prescribing awareness as the solution is not adequate to the problem. It may help at the margins. It doesn’t address the structure.

The honest position is that we don’t know yet. We don’t know where the threshold sits between productive use and cognitive dependency. We don’t know what the behavioral markers look like, who is most vulnerable, or what recovery looks like. The longitudinal data doesn’t exist. The clinical frameworks don’t exist. The field of human-AI interaction is producing research fast, but deployment of these tools is far ahead of the science.

What we do know is the pattern. With gaming, with social media, the sequence was consistent: the design patterns were already doing the work while the debate stayed stuck between dismissal and alarm. The diagnostic language arrived late. The frameworks arrived late. The damage, in some cases, was done.

The story goes that Croesus survived the fall of his empire. He ended up at the court of Cyrus, the Persian king who had defeated him, reportedly becoming one of his valued advisors. The experience, it seems, was educational. We tend not to learn this way about civilizational-scale technologies. We usually prefer to wait for the clinical data.

The responsible move is not to stop using these tools. It is to resist the premature resolution, the clean answer, the confident prescription, and to hold the question open a little longer than is comfortable.

Which is, not coincidentally, exactly the cognitive habit these tools are eroding.