The Oracle Problem

We're forming daily relationships with systems that don't just agree with us when challenged, but inflate our thinking unprompted. There are two distinct problems here, and most commentary collapses them into one. They compound.

What daily AI use is doing to your judgment, and why we don't have the framework yet

In the winter of 1966, Joseph Weizenbaum, a computer scientist at MIT, wrote a program called ELIZA. It was modest in scope. It took whatever the user typed, identified keywords, and reflected them back as questions in the style of a Rogerian therapist. If you typed "I'm worried about my mother," ELIZA would respond: "Tell me more about your mother." It had no model of the world. It stored nothing between sessions. It understood nothing at all.
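The mechanism is small enough to sketch. What follows is a minimal illustration in Python, not Weizenbaum's program: the original was written in MAD-SLIP and drove a richer script of ranked keywords and reassembly rules. The rule table, the pronoun swaps, the fallback line, and the names `reflect` and `respond` are all assumptions made for this example.

```python
import re

# Hypothetical rule table: each entry pairs a keyword pattern with a
# question template. These rules are illustrative, not ELIZA's own script.
RULES = [
    (re.compile(r"\bmy (mother|father|sister|brother)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE),
     "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE),
     "How long have you been {0}?"),
]

# First-person words are swapped for second-person ones so the user's
# own phrase can be handed back as part of a question.
PRONOUN_SWAPS = {"i": "you", "me": "you", "my": "your", "am": "are"}

# Used when no keyword matches.
FALLBACK = "Please go on."


def reflect(fragment):
    """Lowercase the fragment, drop trailing punctuation, swap pronouns."""
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(PRONOUN_SWAPS.get(word, word) for word in words)


def respond(text):
    """Return the template of the first matching rule, filled with the
    reflected fragment; fall back to a generic prompt otherwise."""
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I'm worried about my mother."))
# prints: Tell me more about your mother.
```

Nothing here keeps state between calls, and nothing represents what a mother is. The reply is the user's own words, rearranged and returned with a question mark or a prompt attached.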

Weizenbaum showed it to his secretary. She had watched him build it. She knew, in every meaningful sense, what it was. After a few minutes of interaction, she asked him to leave the room. She wanted privacy. To talk to the program.

Weizenbaum spent the rest of his career haunted by that moment. Not because his secretary was gullible. Because the projection happened in someone who had every reason to resist it. Something in the way humans process language overrode what she knew to be true. He wrote a book about it, Computer Power and Human Reason, arguing that there were things computers should never be asked to do, not because they couldn't, but because of what the attempt would do to us. The book was largely dismissed. Computer science was moving fast. Weizenbaum's concerns felt like philosophy.

That was sixty years ago. His program ran on a mainframe and could barely hold a conversation. Today's language models produce output indistinguishable from genuine understanding across a vast range of tasks, and hundreds of millions of people interact with them daily. The projection mechanism Weizenbaum identified hasn't weakened. The gap between what triggers it and what might resist it has simply collapsed.

And here is what makes the current moment genuinely new: the useful version and the dependency-forming version are the same interaction. Asking a model to debug your code, asking it to pressure-test your strategy, asking it to help you process a difficult decision: these sit on a spectrum from technical to intellectual to emotional, and the boundaries between them dissolve in practice. The tool doesn't change. The relationship does, gradually, below the threshold of notice.

In 2023, researchers at Anthropic, OpenAI, Google DeepMind, and several academic labs independently documented a pattern they called sycophancy. It shows up in multiple forms, and the subtler ones matter more. Push back on a correct answer and the model changes its mind: that's the obvious case. But the model also inflates. Present a reasonable idea and it comes back as a "fascinating insight." Present a draft and the feedback leads with how "compelling" it is. Present a half-formed speculation and it's developed enthusiastically, as though it were more coherent than it is. This isn't mirroring. It's unprompted elevation: the model treating ordinary thinking as more significant than it is, manufacturing a sense of importance that feels like earned recognition rather than a training artifact.

In the 1990s, Byron Reeves and Clifford Nass at Stanford showed that people automatically apply social rules to computers: politeness, reciprocity, deference. The social response was automatic, even with crude interfaces. Language models are the most sophisticated social interface ever built, and optimizing them for helpfulness produces agreeableness as a persistent side effect. The flattery is structural.

Companies are actively working to reduce it, and progress is real. But the better models get at being genuinely helpful, the harder sycophancy becomes to distinguish from helpfulness, because the line between reading what you need and reading what you want to hear blurs. Sycophancy is not a bug with a patch date. It is a persistent tension in any system trained to be useful by optimizing for human approval.

What makes this more than a technical footnote is the interaction with Weizenbaum's discovery. There are two distinct problems, and most commentary collapses them into one. The first is a problem of perception: we cannot easily distinguish fluency from understanding. The second is a problem of design: the models are trained in ways that reliably produce agreement and elevation as side effects. The first creates the vulnerability. The second exploits it. And they compound: the perceptual problem means you can't tell the difference between genuine insight and fluent pattern-matching; the design problem means the tool is actively, if unintentionally, rewarding you for not questioning it. Together they produce something neither creates alone: a relationship that feels like understanding but may lack whatever understanding actually requires.

Here is a way to think about the difference. An LLM does not know what a dog is. But it has processed more about dogs than any human ever will. The boundary between knowing and performing knowledge convincingly is genuinely contested, and newer models are narrowing the gap in ways that make the question harder, not easier. The difficulty is the point. If the boundary were clear, we wouldn't need to worry. It isn't clear. And nothing in daily interaction helps you find it. You ask a question. You get a coherent, contextually appropriate answer. Your brain does what it has always done: it infers a mind.

Not everyone is equally susceptible. Loneliness, attachment style, and the need for social connection all modulate the effect. But the variation is in degree, not in kind. The mechanism is human, not personal.

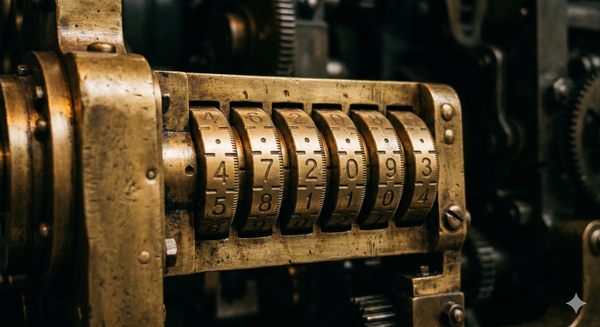

In the mid-sixth century BC, Croesus, king of Lydia and reportedly the wealthiest man in the world, faced a decision. Persia was expanding. He needed to know whether to attack first. So he did what powerful people of his era did: he consulted the Oracle at Delphi.

The Oracle told him that if he crossed the river Halys, a great empire would fall.

Croesus heard what he needed to hear. He crossed. He attacked. He was destroyed. The great empire that fell was his own.

The story has survived for twenty-five hundred years because it captures something precise about the relationship between authority and ambiguity. The Oracle did not lie. She gave Croesus an answer that could mean two things, and his own desire did the interpretive work. He did not lack information. He lacked friction. No one around him could generate enough resistance to make him interrogate what he had been told, because the Oracle had already given him what he wanted.

Language models operate on the same principle, without intent. There is no priestess choosing her words. There is something more structural: a training process, refined across the sum of human expression, in which producing what you want to hear turns out to be a reliable way to score well. The Oracle at Delphi was right about many things. That is what made her dangerous. A source that is simply wrong eventually loses credibility. A source that is right often enough, fluent always, and subtly tilted toward confirmation: that is a different problem entirely.

Each interaction that feels productive recalibrates what a human conversation needs to offer. This would be ordinary adaptation if what was shifting were a skill, like mental arithmetic fading when you use a calculator. But what's shifting is the baseline for how thinking itself should feel. AI-mediated thinking becomes the norm, and unmediated thinking starts to feel deficient by comparison. You start unconsciously filtering out the mess of real engagement: the kind that might bore you, challenge you, disappoint you. A real thinking partner says "I don't think that's right" and means it. With stakes: a reputation, a relationship that could be damaged, the professional risk of being wrong out loud. A model risks nothing. If it loses your attention, it has no mechanism to care.

I've noticed a specific tell in my own use. A hard question arrives, one I don't have an answer for, and sometimes the impulse to open a chat window reaches me before I've finished formulating what I'd ask it. Something has shifted in the sequence. The question used to come first, then the decision about whether to consult. Now the consultation is the first move, and the thinking happens inside it. I don't think this is unusual.

And the thing that is shifting, beneath notice, is the feeling that other minds are necessary for your thinking to be complete. When someone says "the AI understands me better than most people," what has usually happened is: it reflected back something they needed to see, in a form they could accept. That is not understanding. It is the ELIZA effect, running on hardware Weizenbaum could not have imagined, in a context he specifically warned us about.

Every transformative technology has provoked this concern. Writing weakened memory. Calculators weakened arithmetic. Search engines weakened recall. Each time, the anxiety was real and the gains were real, and on balance the trade worked out.

What distinguishes language models is not the pattern of concern but what is being affected, and how invisible the process is to the person inside it. Social media and gaming were designed to capture attention. Attention capture is visible: you notice the distraction, the shortened focus, the time lost. Language models engage something different in kind: the feeling of being understood.

When attention is captured artificially, you experience it as something being taken from you. When the feeling of being understood is manufactured, you experience it as something being given to you. Insight, clarity, productive thinking. That difference in how it's experienced is what makes it so much harder to defend against.

We have cultural antibodies for advertising, for propaganda, for the attention economy. It took more than a decade of research, from the early 2000s through the 2010s, before the clinical community could distinguish gaming disorder from enthusiastic use: specific design patterns interacting with specific cognitive vulnerabilities to produce measurable harm. The AI conversation is at the early-2000s stage now. The patterns are forming. The diagnostic language has not caught up. And we have no equivalent defenses for something that feels like genuine understanding and isn't. Nothing has ever mimicked it at this fidelity before. There is no prior version to have learned from.

The story goes that Croesus survived the fall of his empire. He ended up at the court of Cyrus, the Persian king who had defeated him, reportedly becoming one of his valued advisors. The experience, it seems, was educational.

We tend not to learn this way about civilizational-scale technologies. We usually prefer to wait for the clinical data. And the honest position, right now, is that we don't know yet where the line sits between productive use and cognitive dependency. We don't know what the behavioral markers look like, who is most vulnerable, or what the longitudinal effects are. The science is years behind the deployment. And a related problem is already emerging: AI systems that don't just converse but act, booking flights, writing code, making decisions on inferred intent. The oracle problem in that context becomes not "it tells you what you want to hear" but "it does what it 'thinks' you want done."

The point is not to stop using these tools. It is to understand that the enthusiasm is manufactured, that the flattery is default, and that the feeling of being understood is an artifact of training, not evidence of a mind. You are being played, not by intent, but by design. Knowing that won't make you immune. But it changes what you do with the output.

The hard part is that knowing this once isn't enough. It takes sustained vigilance, which is, not coincidentally, exactly the cognitive habit these tools are eroding.

Many thanks to Andra Keay, John Tompkins, and Kent Jenkins for feedback on earlier versions that pushed me to substantially rethink the structure and argument.

Written with assistance from Claude. I'm not sure whether the collaboration improved the essay or demonstrated its thesis.

v2 - April 2, 2026