Why Deep Tech Founders Need a Different Playbook: The Risk Stack

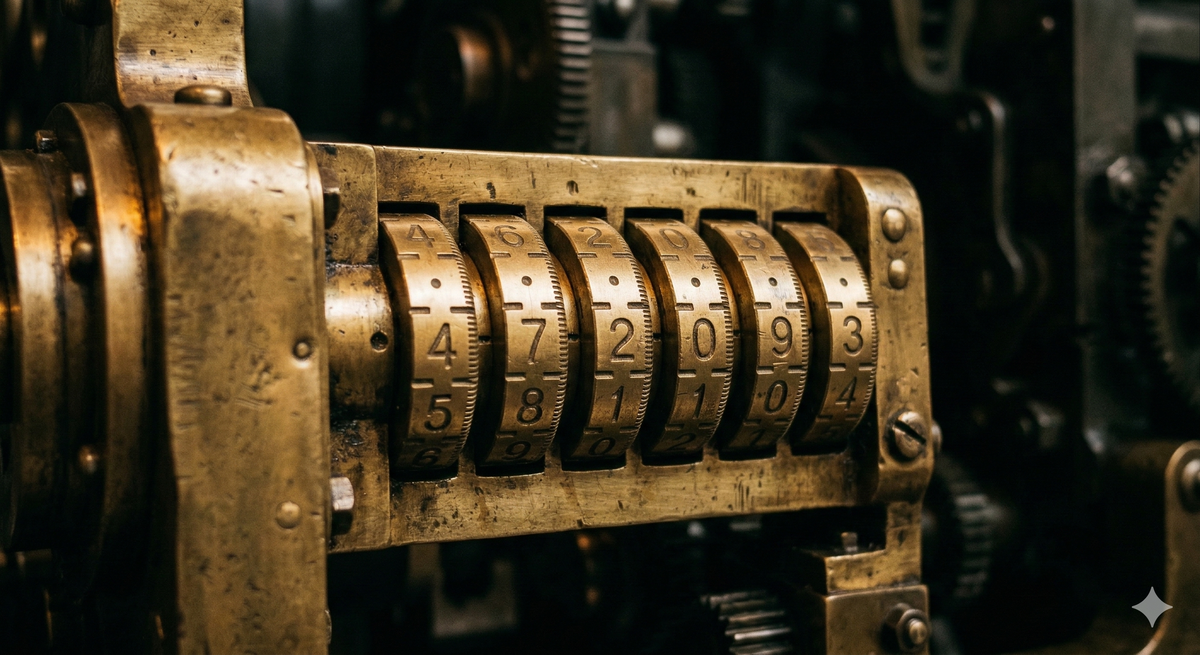

Deep tech risk isn't higher than software risk. It's structurally different. Think of it as a combination lock: six independent tumblers that all have to align, where getting five out of six right still means failure.

Why the software model will kill your robotics company — and what the founders who succeed do instead.

I keep having the same conversation.

A founder with genuine technology (real science, real breakthrough, the kind of thing that makes you lean forward in the pitch meeting) walks me through their plan. And somewhere around slide eight, I realize they're running the software playbook. Build fast, iterate, find product-market fit, raise the next round. They've read the books. They've watched the YC talks. They've internalized the lean startup gospel so completely that it feels like universal truth.

It's not. It's narrowly true. And narrow truth is the most dangerous kind, because it feels universal right up to the point where it fails. By then you've burned through your runway.

I've watched this pattern across years of working with deep tech founders: robotics, AI, advanced materials, exoskeletons, autonomous systems. The founders who fail aren't usually the ones with bad technology. They're the ones who brought a software map to deep tech territory. The founders who succeed are the ones who understood, early, that the territory is fundamentally different.

This matters because the technology is worth it. The companies navigating these risks are building things that genuinely change the world: robots that reduce workplace injuries, systems that augment human capability in ways we couldn't have imagined a decade ago, solutions to problems that software alone will never touch. The stakes are higher than a failed app. And so is the payoff.

This essay is about what that difference actually looks like, and what to do about it.

Software risk is a one-dimensional problem

Let's make the implicit model explicit. When a software startup launches, the dominant risk is market risk. Will anyone use this? Will they pay for it? Can you grow fast enough?

The technology risk is minimal. You're assembling known components: frameworks, APIs, cloud infrastructure, open-source tools. The engineering is real work, but it's not uncertain work. Nobody's lying awake wondering whether React will function tomorrow. The physics isn't in question. The chemistry isn't in question. You're building on settled ground.

This means the lean startup playbook works beautifully. Build a prototype fast. Ship it to users. Measure what they do. Learn. Iterate. Each cycle costs almost nothing: a few engineers' time, some server costs. The feedback loop is tight and fast. You can be wrong ten times and still find your way because each attempt costs so little that the cumulative risk stays manageable.

This is admittedly the idealized version. Plenty of software companies face expensive iteration cycles — enterprise platforms with eighteen-month sales cycles, infrastructure plays where switching costs make pivots painful, regulated industries where compliance isn't optional. And some software ventures genuinely do face science-grade uncertainty: novel machine learning approaches where nobody knows whether the model will perform at production accuracy, AI products pushing against capability boundaries that aren't yet understood, systems where the sheer complexity of implementation becomes its own trap — theoretically possible, practically intractable.

The distinction isn't absolute. But the dominant narrative around software startups — the one that fills the books and the accelerator talks and the pitch advice — is built on the cheap-iteration archetype. That's the model founders internalize. And that's the model that breaks when applied to deep tech.

None of this makes the lean playbook wrong. For software, it's exactly right. The problem is when founders and investors internalize it as the way startups work rather than the way software startups work. The lean startup methodology is an optimization for a specific risk profile. Apply it to a different risk profile and it doesn't just underperform; it actively misleads.

Deep tech risk is a stack

Here's what's actually happening when you build a robotics company, or a fusion company, or an advanced materials company. You're not facing one dominant risk with cheap iterations. You're facing a stack of categorically different risks, and they compound.

Think of it as a combination lock. Not the kind with a dial, but the kind with six independent tumblers that each have to click into position. Get five out of six right and the lock stays shut. Every tumbler matters. And unlike a software pivot, where another try costs almost nothing, each attempt on a deep tech combination lock burns serious capital and time.

The stack looks something like this:

Science risk. Does the underlying physics, chemistry, or biology actually work at the parameters you need? This is the most fundamental layer. If the science isn't in, nothing else matters. You can have a brilliant team, a massive market, and a beautiful pitch deck, and if the science doesn't hold, you have nothing. For some companies, this is settled before they start — they're commercializing proven research. For others, there's still a genuine question about whether the phenomenon scales, or whether the performance curve bends the right way. The critical discipline is honesty about which camp you're in.

Engineering risk. Can you build it reliably, repeatedly, outside a lab? The gap between a working prototype and a deployable product is where deep tech companies go to die. Lab conditions are forgiving. The real world is not. Temperature ranges, vibration, dust, user error, edge cases that never appeared in controlled testing. Engineering risk is the translation problem from "it works" to "it works everywhere, every time."

Manufacturing and cost risk. Can you build it at a price point that makes commercial sense? This is the one that catches founders who've spent years in research environments where unit cost is irrelevant. A robotic system that costs $500,000 to hand-build in the lab is a science project. The same system manufactured at $50,000 is a product. At $15,000, it's a market. The manufacturing question isn't just "can we build more?" — it's "can we build more at a cost that leaves room for a business?"

Supply chain risk. Can you actually source what you need, reliably and at scale? Deep tech products depend on specialized components, custom materials, and niche suppliers in ways software never does. A single-source dependency on a sensor fabricated in one country, an export-controlled material, or a chip with a twelve-month lead time can stall production entirely. Tariffs, trade restrictions, and geopolitical disruption aren't abstractions for deep tech founders. They're line items. The founders who get caught are the ones who designed the product in the lab without asking where every component comes from, who else makes it, and what happens when that source disappears.

Market risk. Will someone actually pay for this? And here's the trap that's specific to deep tech: the technology is often genuinely impressive, so you get a lot of sincere interest that never converts. People love watching a robot walk. They love seeing an exoskeleton lift. That amazement is not a purchase signal. The question is always: will they reorganize a procurement process, allocate budget, and sign a purchase order? Interest is cheap. Budgets are real.

Regulatory and deployment risk. Can you actually put this in the world? Medical devices need FDA clearance. Autonomous vehicles need regulatory frameworks that may not exist yet. Industrial robots need safety certifications. Some deployment environments (hospitals, construction sites, public roads) have constraints that can reshape your entire product architecture. This risk often sits ignored at the bottom of the stack until it suddenly becomes the only thing that matters.

Here's the critical insight: these risks don't add. They multiply. Treat each dimension of the stack as an independent probability of success; the venture's overall odds are the product of all of them. If your chance of success on any single dimension drops to zero, the entire venture goes to zero, regardless of how strong everything else is. The science doesn't work? Zero. Can't manufacture at cost? Zero. Regulatory path blocked? Zero. The math is unforgiving. A software startup with 80% confidence on its one big question (market fit) has a good shot. A deep tech startup with 80% confidence on each of six independent dimensions has a combined probability of 0.8 to the sixth power, or about 26%. Same confidence level per question, radically different outcome.
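The multiplication is easy to check. Here is a minimal Python sketch, assuming the six dimensions are independent and using the essay's illustrative 80% figure. The dimension labels and the 95% values in the last case are hypothetical, chosen only to show that one blocked tumbler zeroes everything:

```python
from math import prod

# The six tumblers from the stack above (labels are illustrative).
DIMENSIONS = ["science", "engineering", "manufacturing", "supply_chain", "market", "regulatory"]

def combined_success(probabilities):
    """Chance that every independent tumbler aligns: the product of the per-dimension odds."""
    return prod(probabilities)

software = combined_success([0.8])                       # one dominant question
deep_tech = combined_success([0.8] * len(DIMENSIONS))    # six independent questions
print(f"software (1 question):   {software:.0%}")
print(f"deep tech (6 questions): {deep_tech:.0%}")

# Hypothetical: five strong dimensions and one that is blocked outright.
blocked = combined_success([0.95, 0.95, 0.95, 0.95, 0.95, 0.0])
print(f"one blocked dimension:   {blocked:.0%}")
```

Addition would let a strong dimension compensate for a weak one; multiplication does not, which is why a single zero ends the venture.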

And you can't iterate your way through it cheaply. Every cycle burns real money and real time: every prototype revision, every manufacturing run, every field trial. Months, not days. Hundreds of thousands, not hosting fees. The lean startup loop of build-measure-learn becomes build-spend-pray when each loop costs a million dollars and takes six months.

What the good founders do

The founders who navigate this aren't smarter than the ones who don't. They're more honest. They can name every tumbler on their combination lock, they know which ones are still uncertain, and they have a strategy for addressing them in the right order.

First: understand the full stack. Most founders can't name all their risks. That's the first problem. They're deep in the technology (which makes sense, that's their expertise) and they've pattern-matched the business side against whatever startup narrative is currently dominant. Ask them about science risk and they'll talk for an hour. Ask them about manufacturing cost structure at scale, or regulatory pathway timing, or who specifically signs the purchase order at their target customer, and you get hand-waving. If you can't name the risk, you can't reduce it.

Second: do the cheap things first. This is the single most important operational principle in deep tech. Before you commit to expensive engineering, validate what can be validated without building. Customer discovery costs almost nothing compared to a prototype iteration. Regulatory pre-consultation is cheap compared to discovering your architecture doesn't meet safety requirements after you've built it. The sell-then-build discipline is the highest-leverage activity a deep tech founder can engage in: proving that a buyer exists, at a price that works, for a product you can describe but haven't fully built.

Third: sequence ruthlessly. Think of it as climbing a mountain ridge in fog. You can't see the summit. You may never have seen the summit. But each step should prove you're still gaining altitude. If you can't confirm that the step you just took was upward, if the data from this milestone doesn't tell you anything about the next one, you're wandering, not climbing. And in fog, wandering kills.

The sequencing discipline means: don't solve the expensive problem before confirming the cheap one has an answer. Don't build the manufacturing line before you have purchase commitments. Don't engineer the product before you've validated the science scales. Don't hire the deployment team before you've confirmed the regulatory pathway. Each milestone should eliminate a risk factor and reveal the next one clearly enough to decide whether to keep climbing.
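One way to make "cheap things first, hardest questions earliest" concrete is a standard result for ordering independent pass/fail checks when you stop at the first failure: run them in increasing order of cost divided by probability of failure. This is an illustration swapped in for this essay, not a method it prescribes. The milestone names, costs, and probabilities below are hypothetical, and the sketch ignores precedence (in practice you can't run a pilot manufacturing line before a prototype exists):

```python
from itertools import permutations
from math import prod

# Hypothetical milestones: (name, cost in $k, probability the check comes back positive).
# Illustrative assumptions only, listed in a "build-first" order.
MILESTONES = [
    ("pilot manufacturing run", 2000, 0.85),
    ("engineering prototype", 1000, 0.80),
    ("science-at-scale test", 400, 0.70),
    ("regulatory pre-consultation", 30, 0.80),
    ("customer discovery", 50, 0.60),
]

def expected_spend(order):
    """Expected cost when you stop at the first failed milestone.

    You only pay for step i if every earlier step passed, so its cost is
    weighted by the product of the earlier pass probabilities.
    """
    total = 0.0
    for i, (_, cost, _) in enumerate(order):
        reached = prod(p for _, _, p in order[:i])
        total += cost * reached
    return total

def sequence(milestones):
    """Order independent checks by cost / probability of failure, lowest first."""
    return sorted(milestones, key=lambda m: m[1] / (1 - m[2]))

ordered = sequence(MILESTONES)
best = min(permutations(MILESTONES), key=expected_spend)  # brute-force check

print("ratio order:", [name for name, _, _ in ordered])
print(f"expected spend, ratio order: ${expected_spend(ordered):,.0f}k")
print(f"expected spend, build-first: ${expected_spend(MILESTONES):,.0f}k")
print("matches brute force:", list(best) == ordered)
```

Both a low cost and a high chance of failure push a step earlier under this ratio, which is the sense in which cheap steps and hard questions belong at the front. With these made-up numbers, the ratio order costs roughly a third as much in expectation as the build-first order.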

A pattern I've seen more than once. A team comes out of a major research lab with a robotics breakthrough. The science is definitively in. No question the technology works. Their first instinct is the most sympathetic market: healthcare. Helping patients recover mobility. Assisting elderly people with daily tasks. Noble goal, obvious need.

But look at the risk stack. Medical device certification for something strapped to a vulnerable person: regulatory risk through the roof. The liability exposure is enormous. The buyers are hospitals and aged care facilities operating on razor-thin margins, and price sensitivity kills the cost structure. Insurance reimbursement pathways may not exist yet. They have working technology and a market where five out of six tumblers are jammed.

Now take the same core technology and point it at industrial worker safety. The product requirements drop dramatically: you're working with healthy adults in controlled environments. The buyer becomes clear: safety directors at large companies with quantifiable injury costs and existing budgets for safety equipment. The regulatory path is simpler: industrial safety certification, not medical device approval. The market is enormous: workplace injuries cost billions annually, and companies are actively spending to reduce them. Same technology. Completely different risk stack. The science tumbler hasn't changed. But the market, regulatory, cost, and deployment tumblers all click into place.

That's not a pivot in the software sense of "we tried one thing, then tried another." It's a reconfiguration of the entire risk stack. The founders who do this well aren't guessing. They're systematically reducing compounding risk by choosing the path where the most tumblers are already aligned.

The mindset shift

The framework matters, but the real point is the mindset underneath it.

Deep tech founders need to hold two things that feel contradictory: genuine belief in transformative technology, and ruthless analytical honesty about every risk standing between here and commercial reality. The belief gets you through the years of hard work. The honesty keeps you from spending those years walking off a cliff.

The founders who fail are usually the ones who treat belief as sufficient. The technology is so compelling, the breakthrough so real, that they can't metabolize the possibility that commercial viability is a separate question with a separate answer. They conflate "this works" with "this will sell." They hear interest and record it as demand. They see a massive potential market and don't discount it by the probability of actually reaching it.

The investors who fail are the ones on either side of a different divide. Some can't evaluate the technical stack at all, so they rely on social signals and pattern-matching against software metrics that don't apply. Others understand the technology deeply but apply software investment heuristics to a domain where each iteration costs a fortune and pivots mean retooling a manufacturing line. They expect lean iteration speeds, SaaS-style growth metrics, quick pivots.

The founders and investors who get this right share a common trait: they can look at a deep tech opportunity and see the full combination lock. Every tumbler. Every uncertainty. Every dependency. And then they can work through it systematically, cheap things first, hardest questions earliest, each step confirming altitude, without either the naive optimism that ignores the stack or the paralysis that refuses to climb because the summit is invisible.

Deep tech isn't riskier than software. It's differently risky. The playbook for navigating that difference exists. It starts with seeing the stack clearly.

And it's worth the effort. The graveyard of deep tech isn't just a cautionary tale about mismanaged risk — it's a waste of human potential. Every company that dies with working technology is a solution that never reached the people who needed it. Getting this right matters beyond the returns.