The Most Expensive Thing to Forget

What the physical AI hype cycle isn't telling investors about risk


Nobody needs to be sold on deep tech right now.

Something genuine has shifted. Foundation models are crossing into the physical world: robots learning tasks from demonstration rather than being hand-programmed for each one, perception systems that generalize across environments, planning architectures that handle novel situations. This convergence is not a narrative. It is an engineering reality that is changing what these companies can do and how fast they can do it. China has recognized this: hundreds of robotics companies, massive state investment, a manufacturing ecosystem that can move from prototype to production at a speed the West struggles to match. The competitive pressure is real and accelerating.

Capital is responding. Physical AI has become the consensus trade. The enthusiasm is warranted. The underlying technology inflection is genuine, the applications are substantial, and the market opportunity is large.

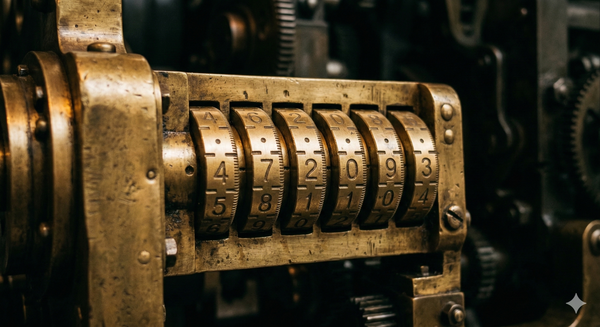

What's not warranted is the assumption that a genuine technology shift makes the underlying risk structure simpler. If anything, it makes disciplined evaluation more important. When the opportunity is real, the cost of getting the execution wrong is higher, not lower. The combination lock that governs deep tech outcomes still has nine tumblers. AI has changed what some of those tumblers look like. It hasn't eliminated any of them. And the competitive pressure from China makes the manufacturing, supply chain, and cost tumblers more consequential for Western companies, not less.

This is not a warning to stay away. It is a framework for knowing what you're buying.

In a previous essay I described deep tech risk as a combination lock: not one big question but nine independent questions that all have to come up right. Science, engineering, manufacturing, supply chain, market timing, regulatory, integration, IP, and team. Get eight out of nine and the lock stays shut.

In a cold market, this math scares people off. If each dimension carries an 80% probability of success, the combined probability is about 13%. Allocators look at that number and flinch.
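The arithmetic behind that figure is simple, on the assumption that the nine dimensions are independent and each succeeds with the same illustrative probability $p = 0.8$:

$$
P(\text{lock opens}) = \prod_{i=1}^{9} p_i = 0.8^{9} \approx 0.134
$$

The independence assumption is a simplification. In practice some tumblers are correlated, so the number is a way of seeing the shape of the problem, not an estimate for any particular company.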

In a hot market, nobody does the math at all. AI is solving the perception problem. Simulation is accelerating the engineering. Surely this much talent and money pointed at the remaining tumblers will force them open.

Some of them, yes. AI-driven perception and planning genuinely reduce science and engineering risk for certain categories of robotics companies. Sim-to-real transfer compresses iteration cycles that used to take years. These are real advances, and they change the probability on specific tumblers for specific companies.

But they don't touch the others. A humanoid robotics company with a world-class learned control system still has to solve manufacturing cost at scale (most haven't), integrate into real customer workflows (almost none have), build supply chain resilience for specialized actuators and sensors, and answer the question of whether anyone will sign a production purchase order at a price that sustains the business. The AI inflection has moved the science tumbler and parts of the engineering tumbler. It has not moved the rest. Those turn through years of specific, expensive, unglamorous work.

In a hype cycle, the unresolved tumblers are harder to see. Forgiving capital lets companies defer the hard questions. A company that would have been forced to prove manufacturing cost before its next raise can now secure another round on demo momentum alone. The tumbler isn't resolved. It's postponed. And postponed tumblers compound: a manufacturing cost problem discovered at scale is vastly more expensive to fix than one confronted early. China's ability to manufacture at lower cost and iterate faster makes this urgent.

The same compounding that makes undisciplined deep tech investing a portfolio killer makes disciplined deep tech investing extraordinary. The difference is categorical, not incremental.

A team that has definitively proven the science isn't sitting at 80% on that dimension. They're at 98%. A team with signed letters of intent from named buyers with allocated budgets isn't at 80% on market risk. They're at 90% or higher. Move five of nine tumblers from 80% to 95% and the combined probability more than doubles, from about 13% to roughly 32%. Move them all to 95% and you're above 60%. These numbers are illustrative, not precise. But the direction is what matters: compounding punishes carelessness and rewards discipline with equal force.
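For readers who want to check the compounding themselves, here is a minimal sketch in Python. It assumes the nine tumblers are independent and uses only the illustrative percentages from the paragraph above. The function and variable names are hypothetical and exist purely for illustration.

```python
from math import prod

def combined_probability(tumblers):
    """Chance that every tumbler opens, assuming the tumblers are independent."""
    return prod(tumblers)

# Illustrative scenarios only; none of these are estimates for a real company.
baseline = [0.80] * 9                     # every tumbler at 80%
five_derisked = [0.95] * 5 + [0.80] * 4   # five tumblers moved to 95%
all_derisked = [0.95] * 9                 # all nine tumblers at 95%

scenarios = [
    ("All nine at 80%", baseline),
    ("Five at 95%, four at 80%", five_derisked),
    ("All nine at 95%", all_derisked),
]

for label, tumblers in scenarios:
    print(f"{label}: {combined_probability(tumblers):.1%}")
```

Changing any single entry in a list shows how sensitive the product is to one weak tumbler: a single dimension left at 50% cuts the result in half no matter how strong the other eight are.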

Every tech sector eventually corrects. Cleantech in 2011. Autonomous vehicles in 2022. The specific dynamics differ (subsidy dependence, unsolved technical problems, market timing), but the pattern is consistent: when capital tightens, the companies that survive are the ones that had already opened most of their tumblers. Real revenue from solved problems. Defensible technical moats. Positions that couldn't be replicated by a better-funded competitor in eighteen months. Robotics and physical AI will face their own version of this. The form it takes may differ from cleantech or AV. The underlying selection pressure will not.

Consider two robotics companies in the current market. Both may call themselves humanoid robotics companies. Both may talk about general-purpose capability. The difference is directional.

One built from the specific outward. A decade of university research, successive generations of hardware, each solving real engineering problems. A domestic manufacturing operation. Production contracts with major logistics operators. Robots deployed into real customer workflows. This company earned its way to broader ambitions by opening tumblers sequentially: science, then engineering, then manufacturing, then market, then integration. The general-purpose language describes where they're headed. The open tumblers describe where they are.

The other started from the general inward. A compelling vision, extraordinary demos, and the assumption that specific deployments will follow once the platform is ready. Manufacturing cost at scale is unproven. Customer workflows haven't been tested. Integration challenges haven't surfaced because the robot hasn't left controlled environments. The general-purpose language describes where they want to be. The closed tumblers describe where they are.

These companies each carry significant valuations. On the risk stack, they are categorically different. In a rising market, the difference is invisible. In a correction, it is the only thing that matters.

That difference didn't happen by accident. The company that built from the specific outward was almost certainly led by founders who understood the full combination lock before any investor explained it to them. The best deep tech founders don't need a risk stack framework. They've been living it. They can name every tumbler, they know which ones are open, and they've sequenced the de-risking based on years of hard-won judgment. Some of them arrived at that sequencing by choice. Others arrived at it because capital constraints forced discipline that turned out to be an advantage. Either way, the framework in these essays is articulating what experienced deep tech founders already do.

When a deep tech company opens its lock, what's behind it is different in kind from what most venture-backed software produces. Software can build enormous moats (Microsoft, Oracle, Google are among the most durable competitive positions in business history), but those took decades of ecosystem accumulation. Deep tech moats are built into the process of opening the lock itself. Manufacturing processes that can't be replicated from a whiteboard. Certifications competitors must earn from scratch. Supply chain relationships built through years of co-development, increasingly valuable as geopolitical disruption reshapes component sourcing. These moats exist the moment the tumblers are open, because the work of opening them is itself the barrier.

There is a pattern in which companies survive corrections, and it is not the one most allocator frameworks are built to detect.

A robotics company deploying into warehouse logistics is solving a specific, quantifiable problem: moving goods in environments where labor is scarce, injury rates are high, and the cost of downtime is measurable. The buyer is a warehouse operations director with a budget, a headcount problem, and a procurement process. Or consider powered wearable technology for industrial worker safety. Workplace musculoskeletal injuries cost billions annually. The safety director at a mining company with quantifiable injury costs and an existing budget for safety equipment is a named buyer with allocated budget and a purchase order to sign. The technology (DARPA-origin research, years of validation) targets healthy workers in industrial settings rather than patients in clinical ones.

The pattern: deep tech companies shaped toward specific, measurable problems have clearer buyers, more defensible positions, and more durable revenue. A warehouse operator's labor costs don't disappear in a correction. A mining company's injury rates don't drop because robotics valuations compress. The customers who need the solution still need it when the hype turns.

For allocators, "what specific problem does this company solve, and who is paying for it?" is a better first-pass filter than any technical evaluation.

The current environment creates a specific temptation: allocate broadly to "physical AI" or "robotics" as a sector bet and trust that the rising tide will sort the winners from the losers. This is exactly wrong. Broad sector allocation without risk stack literacy is not diversification. It is a concentrated bet on the hype cycle continuing.

A serious deep tech allocation requires different commitments, starting with GP selection. The gap between a good and bad deep tech GP is wider than in most venture categories. But what makes a good deep tech GP is specific and evaluable. In a GP meeting, an allocator can ask: walk me through how you evaluated the full risk picture on your last three investments. Where was the science proven and where was it still uncertain? What was the manufacturing plan and at what cost? Which customers had committed budget, and which had only expressed interest? How did you help your portfolio companies close the gaps: specific introductions, supply chain connections, customer validation? Where did you challenge a founder's assumptions, and where did you defer to their expertise? A GP who can answer these questions concretely, with examples, is a fundamentally different bet than one who talks about "the physical AI opportunity" in the abstract.

This also works in reverse. The best deep tech founders are evaluating their investors through the same lens. A founder who has lived the combination lock for a decade can tell immediately whether an investor understands it or is pattern-matching from software. The collaboration only works when both sides bring genuine judgment. Bad investor collaboration (board meetings that demand SaaS metrics from a hardware company, pressure to hit milestones that don't map to the risk stack, "helpful" introductions that waste the founder's time) is as common as the good kind, and experienced founders know the difference. The GP's job is not to impose a framework on the founder. It is to recognize a founder who already has one, and to bring resources that accelerate what the founder is already doing.

Deep tech timelines are longer because the problems are harder, not because the teams are slower. An allocator unprepared for a seven-to-ten-year fund life with limited early distributions should not be in deep tech. An allocator who is prepared gains access to returns that impatient capital structurally cannot reach.

And when evaluating deep tech funds in a hot market, "what problems do your portfolio companies solve?" is the single most useful filter for separating durable from fragile. Funds whose portfolio companies solve quantifiable problems for identified buyers are structurally better positioned for a correction than funds whose portfolio companies have impressive technology searching for applications. The former have customers who need them. The latter have investors who believe in them. When the cycle turns, the difference is existential.

The opportunity in deep tech is real. The technology is genuine, the applications are substantial, and the structural advantages for companies that open the lock are enormous. None of that is hype.

What is hype is the assumption that the opportunity is easy to capture, that impressive demos are evidence of de-risked businesses, and that abundant capital makes the combination lock simpler. The lock is the same lock it has always been. Nine tumblers. Compounding probabilities. Each one requiring specific, expensive, unglamorous work to resolve.

Hype is a weather pattern, not a climate. The combination lock was there before the enthusiasm arrived, and it will be there after it passes.

Compounding works both ways. In a rising market, that's easy to forget. It's also the most expensive thing to forget.

This essay builds on the Risk Stack framework introduced in "Why Deep Tech Founders Need a Different Playbook."