Why Deep Tech Founders Need a Different Playbook: The Risk Stack

Deep tech risk isn't higher than software risk. It's structurally different. Nine tumblers that multiply, not add. But the same compounding that punishes carelessness rewards discipline. The framework exists.

I keep having the same conversation.

A founder with genuine technology walks me through their plan. Real science, real breakthrough, the kind of thing that makes you lean forward in the pitch meeting. And somewhere around slide eight, I realize they're treating deep tech risk as though it works like software risk. It doesn't.

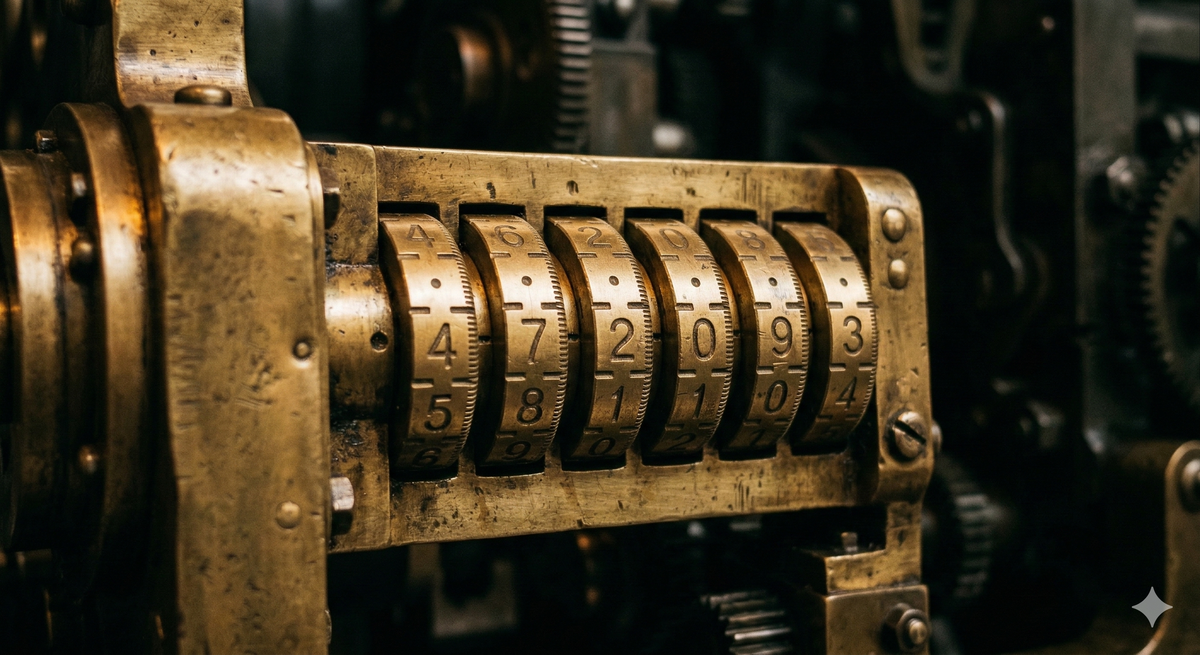

A software startup faces one dominant risk: will anyone buy this? The iteration is cheap, the feedback loop is fast, and you can be wrong ten times and still find your way. Deep tech faces something structurally different. Not one big question but nine separate questions that all have to come up right. Think of it as a combination lock: get eight out of nine and the lock stays shut. And unlike a software pivot, every turn of a deep tech tumbler burns serious capital and time.

The founders who fail aren't usually the ones with bad technology. They're the ones who never saw the full lock.

The tumblers

Here's what's actually happening when you build a robotics company, or a fusion company, or an advanced materials company. No framework captures every risk a specific company will face. But these are the ones I see most consistently, and the ones that most reliably distinguish deep tech from software.

Science risk. Does the underlying physics, chemistry, or biology actually work at the parameters you need? This is the most fundamental layer. If the science isn't in, nothing else matters. You can have a brilliant team, a massive market, and a beautiful pitch deck; if the science doesn't hold, you have nothing. For some companies, this is settled before they start: they're commercializing proven research. For others, there's still a genuine question about whether the phenomenon scales, or whether the performance curve bends the right way. The critical discipline is honesty about which camp you're in.

Engineering risk. Can you build it reliably, repeatedly, outside a lab? The gap between a working prototype and a deployable product is where deep tech companies go to die. Lab conditions are forgiving. The real world is not. Temperature ranges, vibration, dust, user error, edge cases that never appeared in controlled testing. Engineering risk is the translation problem: from "it works" to "it works everywhere, every time."

Manufacturing and cost risk. Can you build it at a price point that makes commercial sense, and can you build it consistently? This is the one that catches founders who've spent years in research environments where unit cost is irrelevant. A robotic system that costs $500,000 to hand-build in the lab is a science project. The same system manufactured at $50,000 is a product. At $15,000, it's a market. But cost is only half the manufacturing question. The other half is consistency. Variance in build quality, calibration drift, assembly tolerances: the difference between a system built by the founding engineer and one assembled by a contract manufacturer following a work instruction document is often the difference between a product that works and one that ships. Companies die in the space between "we can build ten" and "we can build a hundred that all perform identically."

Supply chain risk. Can you actually source what you need, reliably and at scale? Deep tech products depend on specialized components, custom materials, and niche suppliers in ways software never does. A single-source dependency on a sensor fabricated in one country, an export-controlled material, or a chip with a twelve-month lead time can stall production entirely. Tariffs, trade restrictions, and geopolitical disruption aren't abstractions for deep tech founders. They're line items. The danger is designing the product in the lab without asking where every component comes from, who else makes it, and what happens when that source disappears.

Market and timing risk. Will someone actually pay for this, and will they be ready when you need them to be? Deep tech has a specific trap here: the technology is often genuinely impressive, so you get a lot of sincere interest that never converts. People love watching a robot walk. They love seeing an exoskeleton lift. That amazement is not a purchase signal. The question is always: will they reorganize a procurement process, allocate budget, and sign a purchase order? Interest is cheap. Budgets are real.

And timing compounds the problem in both directions. Your technology may be ready in 2026, but if the buyer's procurement cycle, regulatory environment, or cultural readiness doesn't arrive until 2029, you've burned three years of runway being right but early. The reverse is just as dangerous and more common in deep tech: the customer wants the product now, but your development cycle means it won't be ready for two or three or more years. Will that customer still be there? Will they have solved the problem another way, hired more people, restructured the workflow, or simply moved on? A letter of intent signed in enthusiasm today is not a purchase order honored in 2028. The window between customer urgency and product readiness is where deep tech demand goes to die. Being right about the technology and wrong about the timing, in either direction, is one of the most expensive ways to fail in deep tech.

Regulatory and deployment risk. Can you actually put this in the world? Medical devices need FDA clearance. Autonomous vehicles need regulatory frameworks that may not exist yet. Industrial robots need safety certifications. Some deployment environments (hospitals, construction sites, public roads) have constraints that can reshape your entire product architecture. This risk often sits ignored at the bottom of the stack until it suddenly becomes the only thing that matters.

Integration risk. Can you make it work inside the customer's world? This is the risk that doesn't appear in the lab, the demo, or the trade show. Your robot works. Your customer's factory works. Putting your robot in your customer's factory breaks both. Integration with existing workflows, legacy systems, physical infrastructure, and human processes is responsible for more stalled deployments than any other single tumbler. The customer's MES doesn't talk to your API. The floor layout requires a reconfiguration nobody budgeted for. The operators who were supposed to supervise the system treat it as a threat. A product that works perfectly in isolation and fails on contact with real operating environments is not a product. It's a prototype that escaped the lab.

IP and defensibility risk. Can you protect what you've built? In software, defensibility usually comes from network effects, switching costs, or data moats. In deep tech, it often comes from patents, trade secrets, and freedom to operate. A company that doesn't own its core IP cleanly, or discovers a blocking patent held by a university or a large player, faces an existential risk. And the offensive side matters just as much: your IP may be the only thing preventing a well-capitalized incumbent from replicating your work once you've proven the market exists. The founders who get caught are the ones who assumed the science was the moat. Science published in a journal is science available to everyone. The moat is the specific implementation, the process knowledge, and the legal protection around it.

Team risk. Do you have the right people, and can you keep them? Every startup faces this, but deep tech faces a version that's categorically harder. A software startup needs engineers who can ship product. A deep tech startup needs a research scientist who understands the core phenomenon, mechanical and electrical engineers who can translate lab to product, a manufacturing specialist, someone who understands the regulatory pathway, and someone who can sell a product category that may not have existed before. The talent pool for each of these is thin, the combination is rare, and losing any one of them can stall the company for months.

Add to this the co-founder problem. Deep tech co-founders often have fundamentally different relationships to the technology itself. One sees it as their life's work: identity-level attachment to the science, years of doctoral research, a sense that this is what they were put on earth to do. The other sees it as a means to a commercial outcome. That divergence doesn't surface in the first year, when everything is about proving the technology works and both founders are aligned. It surfaces in year three, when the company needs to make compromises the science founder experiences as betrayal: cutting a feature that's technically beautiful but commercially irrelevant, pivoting away from the original application, licensing the IP instead of building the product. Co-founder conflict kills companies in every sector. In deep tech, where the emotional stakes are higher and the journey is longer, it kills more often, and more painfully.

That's nine tumblers. It looks like a catalog of doom. It isn't. No company faces all nine at full uncertainty. A team commercializing proven science with an identified buyer in an existing regulatory framework might have only two or three genuinely open questions. The framework is a diagnostic tool, not a death sentence. The point is knowing which tumblers are already resolved, which are still open, and what it will take to close them.

And not every company needs to open the full lock. For founders building toward a technology acquisition or IP license rather than full commercial scale, the framework is equally useful but the question changes: which tumblers make you valuable to an acquirer, and which ones are you deliberately leaving for them to solve? The discipline is the same. Prove the science, protect the IP, demonstrate enough engineering to be credible, and don't burn capital on manufacturing scale or market development that the acquirer will handle with their own resources. Over-investing in tumblers the acquirer doesn't need is as wasteful as ignoring the ones they do.

The math, the capital problem, and why it's worth it anyway

The compounding math works both ways.

Think of each tumbler not as a risk but as a probability of success: the chance that you'll get that dimension right. If your probability of success on any single dimension drops to zero, the entire venture goes to zero, regardless of how strong everything else is. Science doesn't work? Game over. Can't manufacture consistently? Game over. Integration fails? Game over. And the probabilities multiply, not add. A deep tech startup with an 80% chance of success on each of nine unmanaged dimensions has a combined probability of about 13%. That sounds brutal, and taken naively it is.

But the same compounding that punishes carelessness rewards discipline. A team that has definitively proven the science, locked in a manufacturing partner, validated a real buyer, and secured freedom to operate isn't sitting at 80% on those dimensions. They're at 95% or higher. Move five of nine tumblers from 80% to 95% and the combined probability more than doubles. Move them all to 95% and you're above 60%.
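For readers who want to check the arithmetic, here is a minimal sketch. It assumes the tumblers are independent, so the per-tumbler probabilities can simply be multiplied; that is a simplification, and the function and variable names are illustrative only.

```python
from math import prod


def combined_probability(probabilities):
    """Multiply per-tumbler success probabilities (assumes independence)."""
    return prod(probabilities)


N_TUMBLERS = 9

# Three scenarios from the text: nothing de-risked, five tumblers
# moved from 80% to 95%, and all nine moved to 95%.
unmanaged = [0.80] * N_TUMBLERS
five_derisked = [0.95] * 5 + [0.80] * 4
all_derisked = [0.95] * N_TUMBLERS

baseline = combined_probability(unmanaged)
partial = combined_probability(five_derisked)
full = combined_probability(all_derisked)

print(f"All nine at 80%:       {baseline:.1%}")  # 13.4%
print(f"Five at 95%, four 80%: {partial:.1%}")   # 31.7%
print(f"All nine at 95%:       {full:.1%}")      # 63.0%
print(f"Improvement from de-risking five: {partial / baseline:.2f}x")

# A single tumbler at zero takes the whole product to zero.
one_failure = combined_probability([0.95] * 8 + [0.0])
print(f"Eight at 95%, one at 0%: {one_failure:.1%}")  # 0.0%
```

The last case is the "game over" scenario: eight strong tumblers cannot compensate for one that fails outright.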

And when the lock opens, what's behind it is worth the effort. Deep tech that reaches commercial scale produces structural advantages software companies can never build: physical moats, regulatory moats, manufacturing moats, and market positions that take years and hundreds of millions of dollars to replicate. The leverage is enormous. The returns, for investors who understand the risk profile, reflect that leverage.

The catch is that every turn of a tumbler costs real money and real time. Months, not days. Hundreds of thousands, not hosting fees. Which makes the dependencies between tumblers even more consequential.

The tumblers aren't independent. A supply chain constraint forces an engineering redesign, which changes the cost structure, which reshapes the market you can address, which alters the regulatory pathway. A key person leaves and the IP strategy stalls, which delays the fundraise, which compresses the timeline for everything else. The combination lock metaphor is useful but generous: a real deep tech risk stack is less like independent tumblers and more like interlocking gears, where turning one moves others, sometimes in ways you didn't plan for.

For hardware companies with significant software components (and most modern deep tech companies are: a robotics company is typically 30-60% software by engineering headcount), the interlocking is even more pronounced. Perception, planning, control, fleet management, over-the-air updates: these carry their own versions of science risk (will this perception model generalize?), engineering risk (can we ship reliable OTA updates?), and team risk (can we recruit the right ML engineers for robotics?). The risk stack for a company building physical AI is really two interlocked stacks, hardware and software, and treating it as one underestimates the complexity.

This is where capital becomes the constraining reality. Capital is not a tenth tumbler. It's the resource you spend turning all the others. The fundraising environment for deep tech is structurally different than for software: longer timelines, fewer investors who understand the risk profile, milestones that don't map to SaaS metrics.

And founders often don't have complete freedom to choose which tumbler to turn next. What investors will fund at each stage imposes its own cadence. Companies at the center of a hype cycle (physical AI today, quantum computing before that) get more forgiving capital, longer runways, and more bites at the cherry. The discipline is to use that window to de-risk aggressively while the money is patient. But hype is a weather pattern, not a climate, and building a company that depends on continued hype-cycle funding is its own form of risk mismanagement. The founders who run out of money rarely run out because they spend too much. They run out because they spend it in the wrong order.

What de-risking looks like in practice

The founders who tend to navigate the full stack share a few habits I've come to watch for.

Name the full lock. Most founders can articulate their science risk with precision; that's their expertise. The gaps tend to appear everywhere else. Ask about manufacturing cost structure at scale, or who specifically signs the purchase order at their target customer, or what happens when their lead engineer leaves, and you get vaguer answers. This is not a failing. It's a natural consequence of spending years deep in the technology. But the risks you can't name are the ones you can't reduce.

Do the cheap things first. Before committing to expensive engineering, validate what can be validated without building. Customer discovery costs almost nothing compared to a prototype iteration. Regulatory pre-consultation is cheap compared to discovering your architecture doesn't meet safety requirements after you've built it.

A caveat: for genuinely novel product categories, "cheap validation" is harder than it sounds. If the buyer has never purchased this kind of thing, they often can't evaluate a description. They need to see it, touch it, sometimes fail with a prototype before they understand what they're buying. The principle still holds, but the practice shifts: build just enough for the buyer to understand what they'd be buying. Use proxies: people with deep customer domain experience who can bridge the gap between a technology description and a procurement decision. This is one reason deep tech in B2B markets has a structural advantage: the buyers are professionals with quantifiable problems and existing budgets. The goal is to compress the confidence interval on market risk as far as you can before you've spent your engineering budget. Hope begets a very wide confidence interval.

Sequence the heavy spend. This doesn't mean ignoring future tumblers until the current one is resolved. You should always be learning about what's ahead: understanding the engineering constraints while validating the science, scoping the regulatory pathway while building the prototype, talking to manufacturers while the design is still evolving. What it means is not committing serious capital to a problem that depends on an unresolved earlier one. Don't build the manufacturing line before you have purchase commitments. Don't pour engineering budget into a product before you've confirmed the science scales. Don't hire the deployment team before you've confirmed the regulatory pathway. File provisional patents before publishing. Lock in your key people before the long grind starts. Each milestone should reduce a risk factor or reveal the next one clearly enough to decide whether to keep going. A complication: the ideal de-risking order and the fundable de-risking order are not always the same. Knowing how to present the minimum capital required to reach each next milestone is a core founder skill that rarely gets taught.

An example: A team comes out of a major research lab with a robotics breakthrough. The science is definitively in. No question the technology works. Their first instinct is the most sympathetic market: healthcare. Helping patients recover mobility. Assisting elderly people with daily tasks. Noble goal, obvious need.

But look at the risk stack. Medical device certification for something strapped to a vulnerable person: regulatory risk through the roof. The liability exposure is enormous. The buyers are hospitals and aged care facilities operating on razor-thin margins, and price sensitivity kills the cost structure. Insurance reimbursement pathways may not exist yet. They have working technology and a market where most of the tumblers are jammed.

Now take the same core technology and point it at industrial worker safety. The product requirements drop dramatically: you're working with healthy adults in controlled environments. The buyer becomes clear: safety directors at large companies with quantifiable injury costs and existing budgets for safety equipment. The regulatory path is simpler: industrial safety certification, not medical device approval. The market is enormous: workplace injuries cost billions annually, and companies are actively spending to reduce them. Same technology. Completely different risk stack. The science tumbler hasn't changed. But the market, regulatory, cost, and deployment tumblers all click into place.

That's not a pivot in the software sense. It's a reconfiguration of the entire risk stack. The founders who do this well aren't guessing. They're systematically reducing compounding risk by choosing the path where the most tumblers are already aligned.

The mindset shift

The framework matters, but the real point is the mindset underneath it.

Deep tech founders need to hold two things that feel contradictory: genuine belief in transformative technology, and ruthless analytical honesty about every risk standing between here and commercial reality. The belief gets you through the years of hard work. The honesty keeps you from spending those years walking off a cliff.

The failure mode I see most often isn't bad technology. It's treating belief as sufficient. The technology is so compelling, the breakthrough so real, that the possibility of commercial failure becomes hard to metabolize. "This works" slides into "this will sell," "this will scale," "this will integrate." Each tumbler gets the same unearned confidence. Interest gets recorded as demand. A massive potential market goes undiscounted by the probability of actually reaching it. I've watched this happen from the other side of the table and recognized it too late more than once. The investor version of the same failure is subtler but no less damaging: you see the technology clearly, you understand the stack, and you fund the company anyway because the science is too exciting to walk away from, without forcing the hard conversation about which tumblers are still genuinely open. Belief is contagious. It is supposed to be. The discipline is knowing when you've caught it.

Investors bring their own version of the problem, whether it's pattern-matching against software metrics, expecting lean iteration speeds in a domain that doesn't permit them, or funding on social signal in place of technical evaluation. The ones who help their founders navigate the stack (understanding which tumbler to turn next, helping sequence the de-risking, making introductions that resolve supply chain or customer validation risks) don't do it from a position of superior clarity. They do it because the view from outside the company is often materially different from the view inside it, and the combination of both views is what produces good decisions.

The tension between belief and analytical honesty doesn't resolve. It sits there, through every board meeting, every fundraise, every decision about where to spend the next six months of runway. The founders and investors who navigate deep tech well are not the ones who have found the balance point. They're usually the ones who keep recalibrating, decision by decision. The recalibration is the discipline. It doesn't end.

Deep tech isn't riskier than software. It's differently risky, and the framework for navigating that difference exists. It starts with seeing the stack clearly.

And it's worth the effort. The graveyard of deep tech isn't just a cautionary tale about mismanaged risk; it's a waste of human potential. Every company that dies with working technology is a solution that never reached the people who needed it. Getting this right matters beyond the returns.

Thanks to Andra Keay, John Tompkins, and Kent Jenkins for invaluable feedback along the way.

V2.0 - April 2, 2026